Mori gave an invited talk titled “Future Challenges of AI and Security” at the NICT Cybersecurity Symposium 2026.

Mori gave an invited talk titled “AI and Security” at the JNSA 25th Anniversary Commemorative Event lecture session.

We are thrilled to announce that our poster entitled “Why Do Non-English Languages Exhibit Higher Vulnerability to Data Poisoning Attacks Against Text-to-Image Models?” has been accepted to the NDSS 2026 Poster Session. Congratulations to Kakebayashi-kun and kudos to the entire team!

Ryohei Kakebayashi and Tatsuya Mori. "Poster: Why Do Non-English Languages Exhibit Higher Vulnerability to Data Poisoning Attacks Against Text-to-Image Models?" NDSS 2026 Poster Session (#68), San Diego, CA, USA, Feb 2026.

Mori gave an invited talk titled “Physical AI Security: The Current State and Outlook of Offensive Security Research” at SCAIS2026.

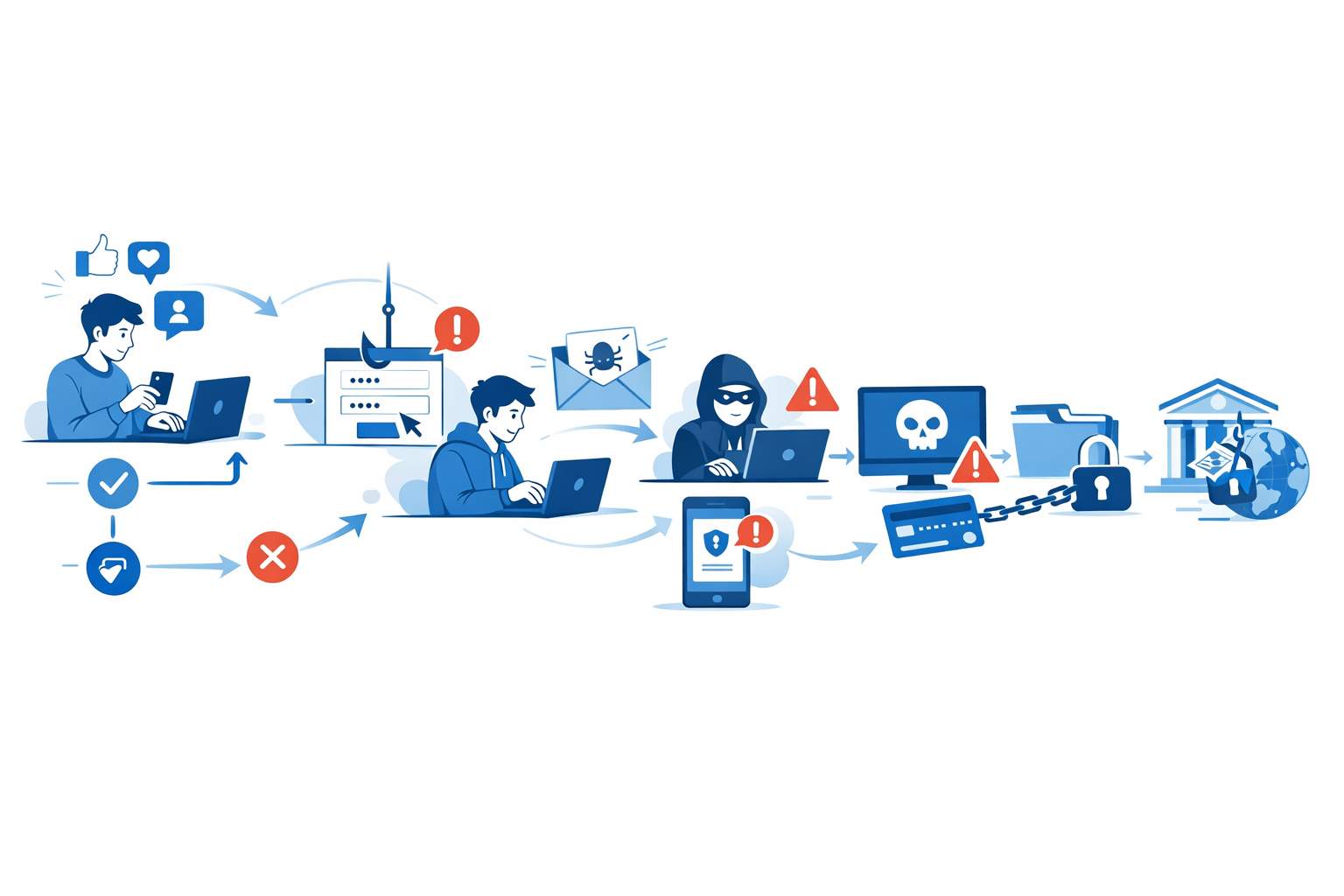

We are thrilled to announce that our paper entitled “Negative Aspects of Social Triggers for Security and Privacy: Chains of Risky User Behaviors” has been published in the Journal of Information Processing (IPSJ). Congratulations to Moore-kun and kudos to the entire team!

Lachlan Moore, Tatsuya Mori, and Ayako A. Hasegawa. “Negative Aspects of Social Triggers for Security and Privacy: Chains of Risky User Behaviors.” Journal of Information Processing, Vol. 66, No. 12 (Dec 2025).